Redefining Cloud Security & Compliance with Generative AI

Accelerated enterprise security resolution and compliance readiness through verifiable AI safeguards.

Some artifacts have been changed or omitted to comply with NDA.

IMPACT

As UX Lead for Google Cloud’s Security, Privacy, and Compliance (SPC) portfolio, my objective was to use generative AI to meaningfully transform complex, high-friction workflows.

THE CHALLENGE

Supercharging high-stakes security workflows with generative AI

Security, Privacy, and Compliance is a vast horizontal that underpins the entire Google Cloud ecosystem, spanning everything from identity and data protection to security operations and compliance management. Workflows touch all customer and user roles, from startups to highly regulated global enterprises. When it comes to security, getting AI features right isn't optional. And for companies in regulated industries, the risks are significantly larger. A single AI error could trigger failed external audits, large financial penalties, and compromised operating licenses.

In the wake of rapid AI advancements, I drove product strategy and cross-functional alignment to take multiple GenAI features from inception to launch in just 4 months, working directly with the core Gemini Cloud Assist team to evolve the platform alongside the features.

SCOPING & DISCOVERY

Breaking product silos by shifting the org to JTBD-level thinking to expose critical cross-product friction.

Under tight deadlines for Cloud Next, the initiative lacked a cohesive scope. Multiple siloed product teams were brainstorming disjointed AI capabilities within their existing product boundaries with no PRDs. However, real user workflows in this domain frequently cross product lines.

To prevent a fragmented experience, I scoped the work into two tracks aligned to core Jobs-to-be-Done (JTBD) across the security lifecycle based on existing user research:

Administrators

Managing security and compliance in the cloud — planning and implementing platform controls, mapping regulatory requirements to cloud controls, and monitoring compliance posture.

Developers & DevOps

Designing, building, and deploying secure and compliant applications across the software development lifecycle.

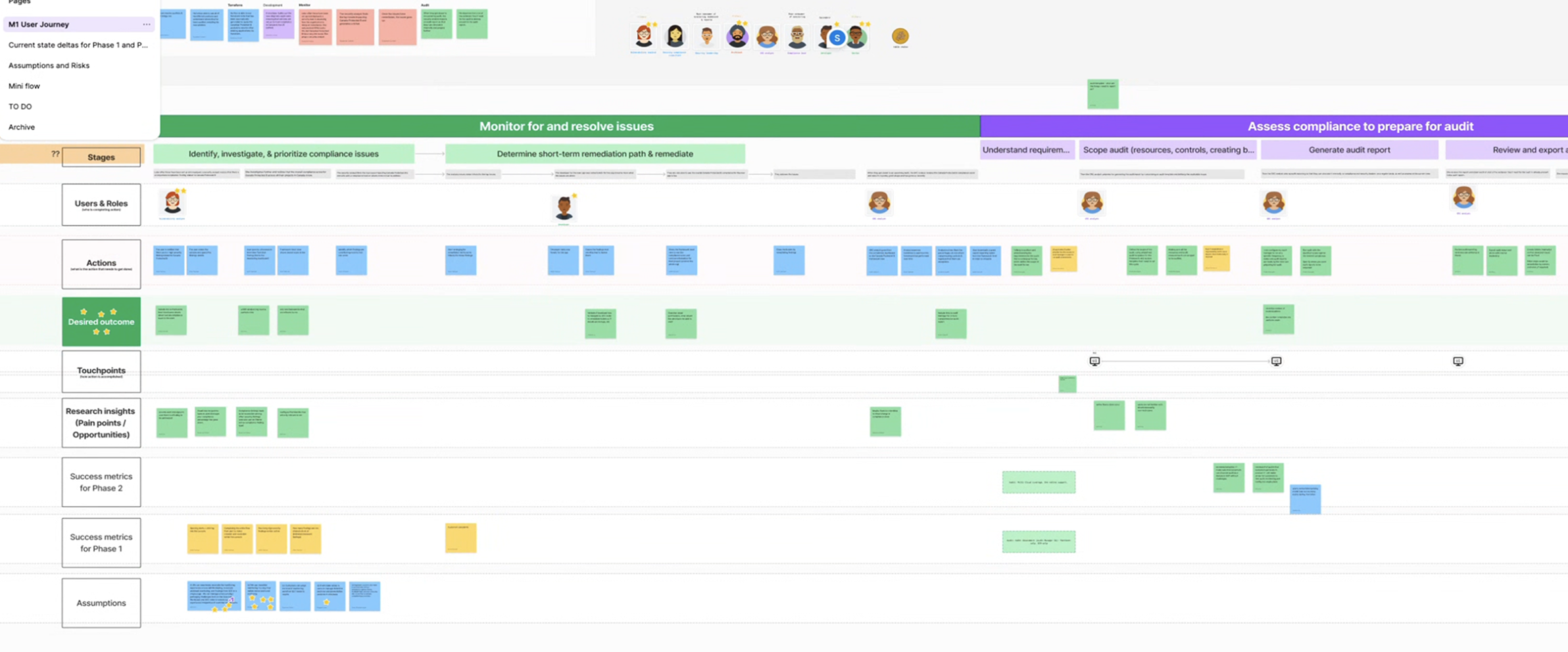

I ran service blueprinting sessions with PM and UXR to map the end-to-end journey for each JTBD.

With agentic AI, I wanted to push our team to think bigger:

Service blueprint aligned to JTBD across the security lifecycle

Because enterprise cloud environments are not one-size-fits-all, my designs needed to account for the varying levels of security expertise, architectural complexity, and organizational risk tolerance.

PRIORITIZATION

I drove AI prioritization to determine the right problems to solve.

As the team's AI subject matter expert, I partnered with cross-functional stakeholders to navigate what our LLM platform could realistically achieve and what problems should be solved with generative AI. To help my team answer the question, "Is it a good problem for AI to solve today?", I led product and engineering to evaluate AI suitability against user impact to identify the most impactful user problems to pursue for both tracks.

Through these exercises, I steered the organization to narrow our focus to two product North Stars, anchoring the work in measurable success metrics:

An administrator can set up their compliance posture in a single sitting, without referring to external documentation.

A non-specialist developer can resolve a security finding without escalating to a compliance team.

This alignment directly influenced how engineering teams approached their agent models, secured executive buy-in and transformed fragmented efforts into a single, unified roadmap.

DESIGN PRINCIPLES

I defined foundational UX principles to establish a shared direction for generative AI experiences across SPC.

Grounded in foundational user research across SPC and AI initiatives in Cloud, I defined five UX principles to guide all GenAI design decisions across the portfolio. The central goal: democratize SPC expertise to help users move from understanding to action.

Build trust through education and transparency

Guide users with transparent explanations and rationale, breaking down jargon and minimizing the need for prior cloud controls knowledge.

Meet users where they are

Insights and recommendations should be contextual — surfaced at the moment they're relevant, not in a separate tool.

Keep users in control

"Help me do it but let me verify." Streamline effort through intelligent defaults while keeping humans in the loop.

Democratize security expertise

Make complex security, privacy, and compliance concepts accessible to users of all technical levels.

Anticipate needs and surface gaps

Proactively identify and recommend solutions or best practices users wouldn't otherwise discover.

PROTOTYPING & TESTING

Bridging the gap between agentic capability and user trust.

To translate our two North Stars into execution, I shaped the final agentic experiences through cycles of rapid prototyping and user validation. I partnered closely with engineering and Gemini Cloud Assist platform partners throughout to understand our fast-evolving AI stack and model development, and used Figma to explore GenAI interaction patterns.

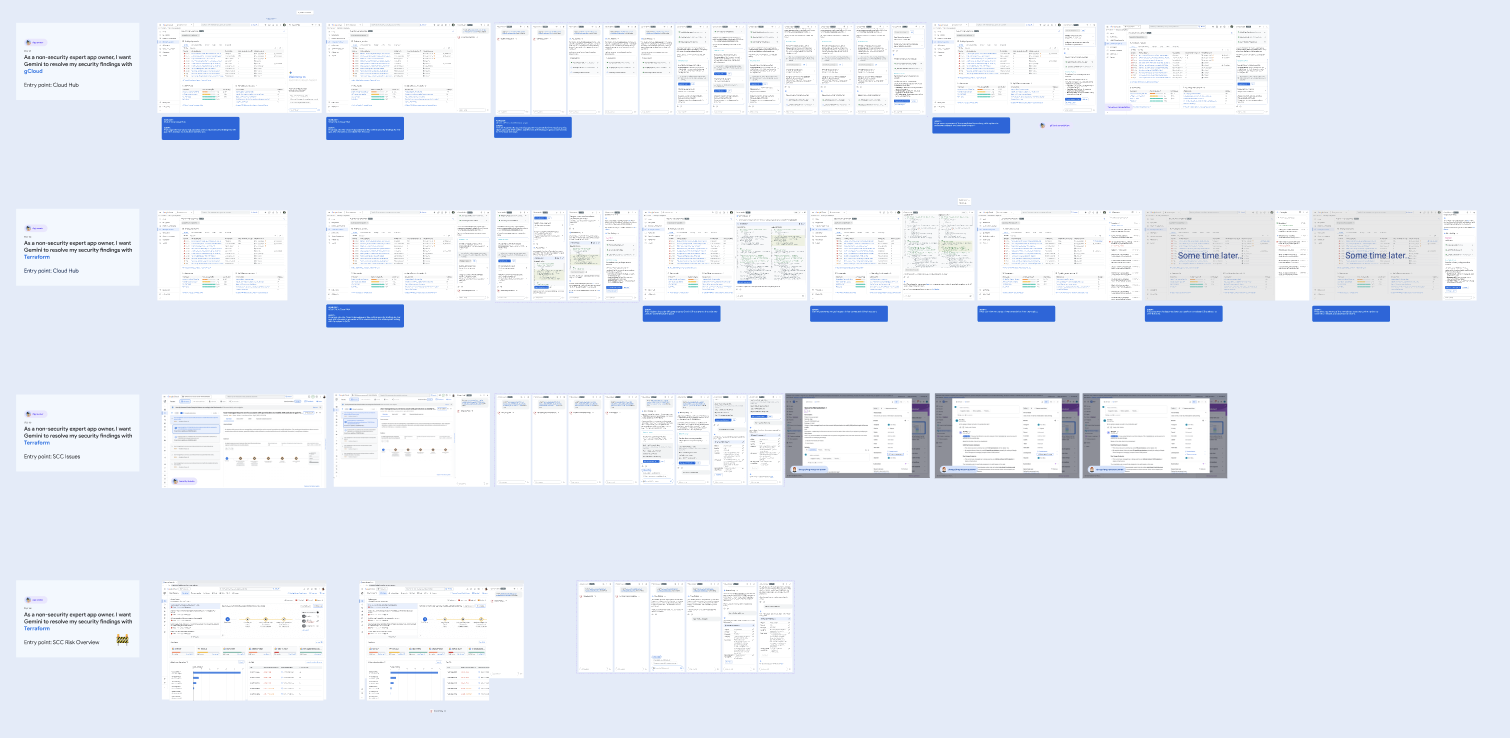

Exploratory agentic interaction flows

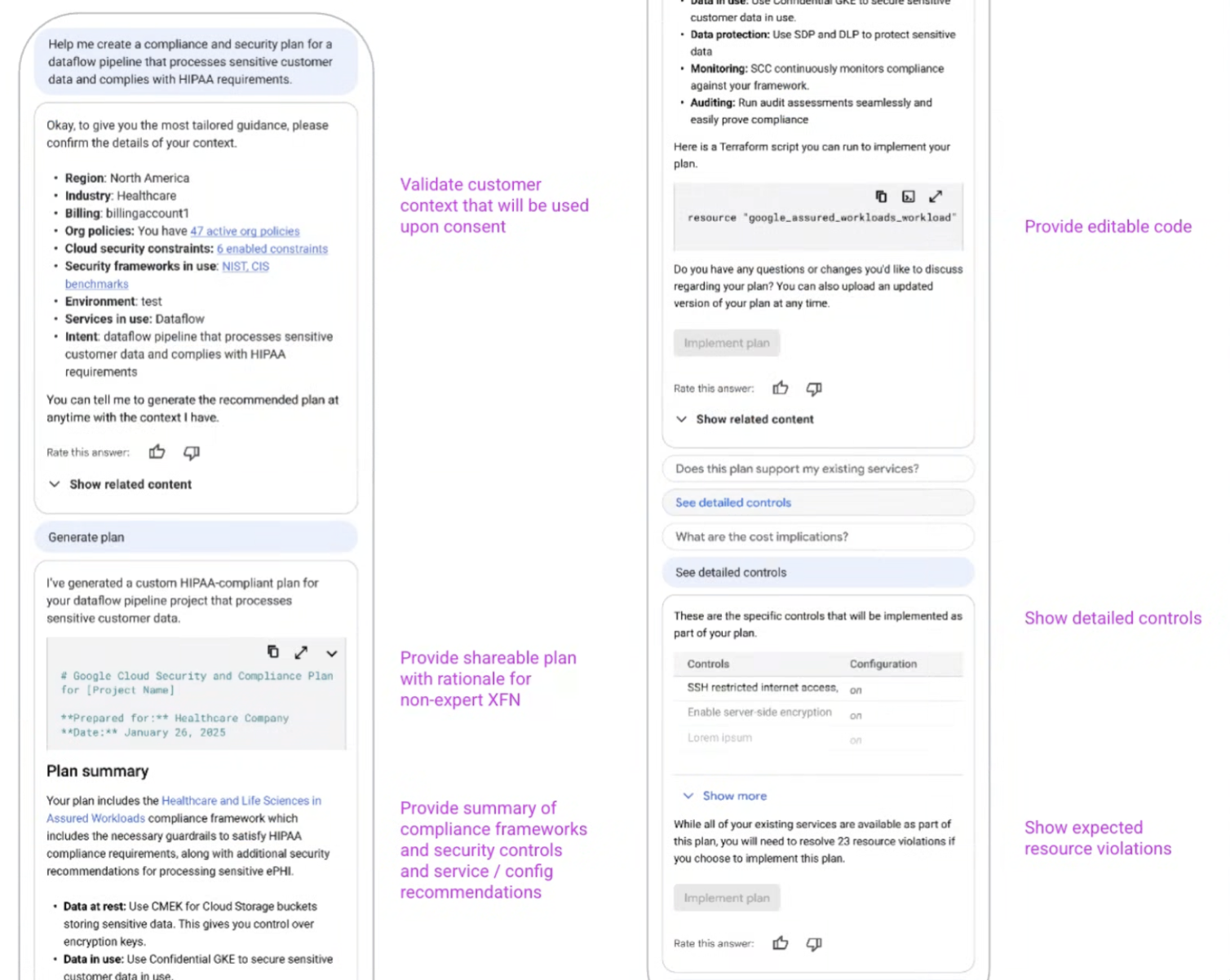

Compliance plan designs

Reducing compliance setup in Google Cloud from months to minutes

The problem: Mapping regulatory requirements to GCP cloud controls is a process that takes months to a year and requires specialist knowledge most teams don't have. Many large enterprises still do this in spreadsheets. 🤯

Information overload from a complex, changing landscape makes it hard to understand requirements, classify existing infrastructure, and translate needs for non-technical stakeholders.

Manually mapping regulatory and internal policies to GCP solutions is confusing.

Translating requirements into concrete configurations is tedious and error-prone, with no tooling to guide the process, and requires specialist expertise most teams don't have in-house.

Manual configuration creates downstream risk. Misconfigured services create real security exposure and audit liability.

"Due to compliance, implementation took us 1.5 years."

"I want to see how it creates and comes up with a plan for the data pipeline."

My primary goal with the first iteration was to validate the information users needed to confidently make informed decisions and take action.

Initial compliance plan prototype

Research insights: While users liked the concept in theory, we uncovered critical user needs for it to be a viable solution.

Users wanted autonomy but needed to verify.

To earn trust at each step, users need to be able to verify the rationale behind recommendations before anything is applied.

No single person approves a compliance plan.

Downloading the plan for offline review and discussion with multiple stakeholders was a hard requirement.

Terraform is non-negotiable for enterprise DevOps.

Enterprise teams deploy through IaC, so implementing final recommendations as Terraform configurations was the only way to fit into how they actually work at scale.

Design solution: To bridge this trust gap without scaling back agentic abilities, I introduced checkpoints, dry runs, and rollback capabilities as verifiable AI safeguards. These guardrails let users safely test the agent’s actions without security risk, building trust by proving to operators that they remained in full control.
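As a rough illustration of how the three safeguards relate, here is a minimal Python sketch. It is not the shipped implementation: `Step`, `GuardedRun`, and the configuration keys are hypothetical names chosen for the example, and a real agent would act on cloud resources rather than an in-memory dictionary.

```python
from dataclasses import dataclass, field
from typing import Callable

Config = dict[str, str]


@dataclass
class Step:
    """One agent action: a description plus a function that returns a new config."""
    description: str
    apply: Callable[[Config], Config]


@dataclass
class GuardedRun:
    """Hypothetical runner that saves a checkpoint before every applied step."""
    config: Config
    checkpoints: list[Config] = field(default_factory=list)

    def dry_run(self, steps: list[Step]) -> list[str]:
        """Preview what each step would add or change, leaving the live config untouched."""
        preview = dict(self.config)
        report = []
        for step in steps:
            after = step.apply(dict(preview))
            changed = sorted(k for k in after if preview.get(k) != after[k])
            report.append(f"{step.description}: would change {changed}")
            preview = after
        return report

    def execute(self, step: Step) -> None:
        """Record a checkpoint, then apply the step to the live config."""
        self.checkpoints.append(dict(self.config))
        self.config = step.apply(dict(self.config))

    def rollback(self) -> None:
        """Restore the most recent checkpoint, if one exists."""
        if self.checkpoints:
            self.config = self.checkpoints.pop()


# Illustrative usage with made-up settings.
steps = [
    Step("Enable audit logging", lambda c: {**c, "audit_logging": "on"}),
    Step("Restrict data region", lambda c: {**c, "region": "eu"}),
]
run = GuardedRun(config={"audit_logging": "off"})
for line in run.dry_run(steps):
    print(line)
```

The point of the sketch is the ordering: the user sees the preview first, every applied step is preceded by a checkpoint, and rollback only ever returns to a state the user has already seen.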

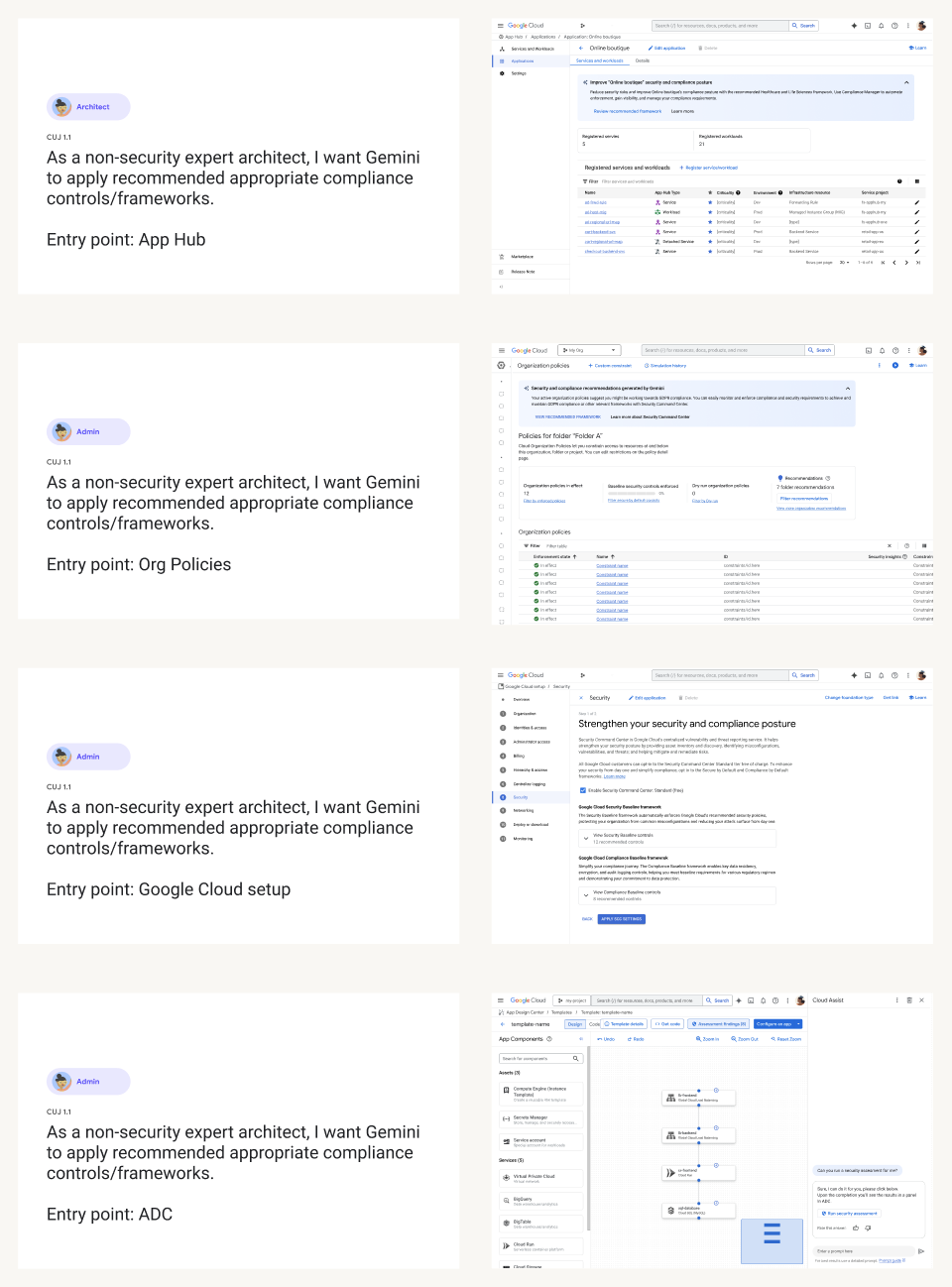

Meeting users where they are

Compliance needs don't surface when someone navigates to a compliance dashboard but rather while planning and configuring a workload, or when a finding appears in Cloud Hub. I coordinated across external product teams to embed high-impact entry points into their surfaces to meet users at moments of highest intent.

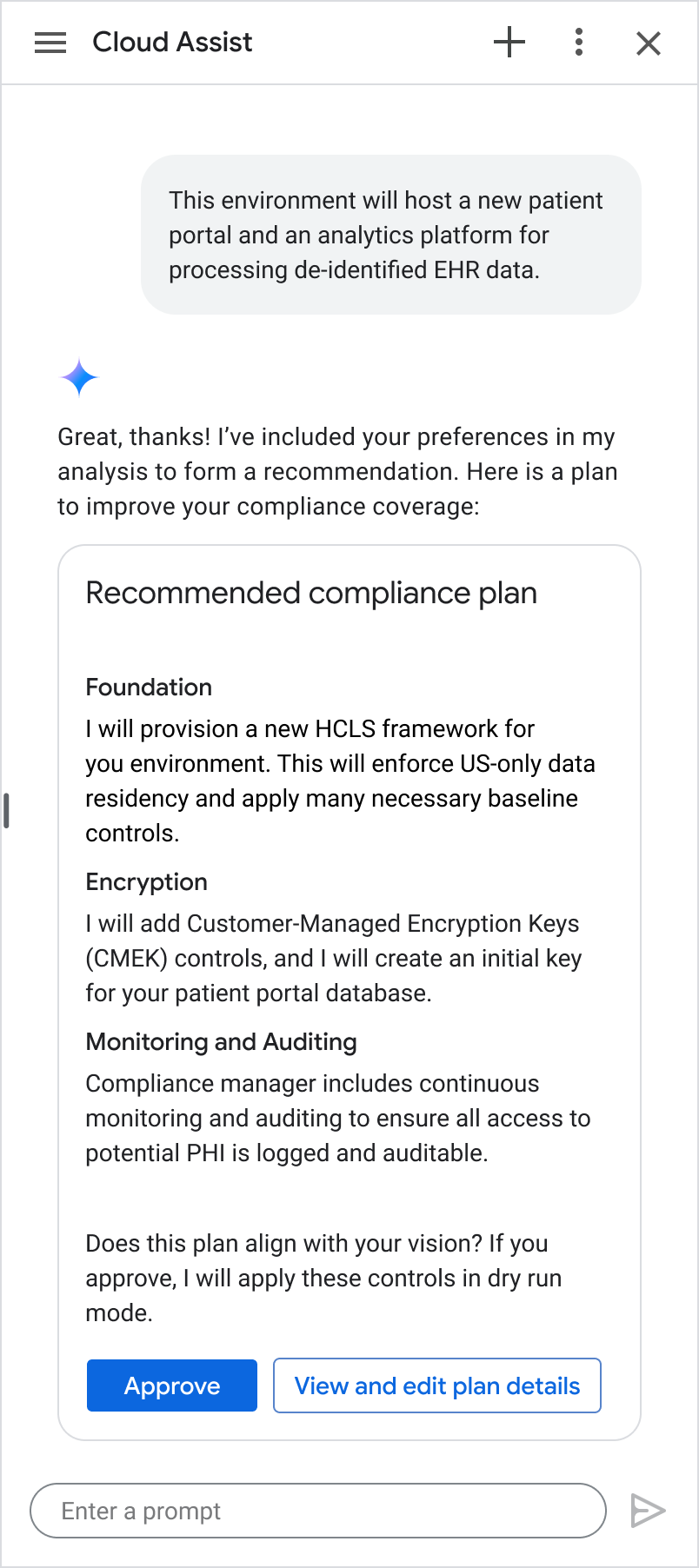

Surfacing personalized recommendations

Gemini generates a personalized compliance plan based on the user's industry, region, environment, and stated requirements. I designed a multi-step plan component with progressive disclosure, allowing users to see Gemini's implementation strategy in plain language.

Users can review with stakeholders and edit before anything is applied.

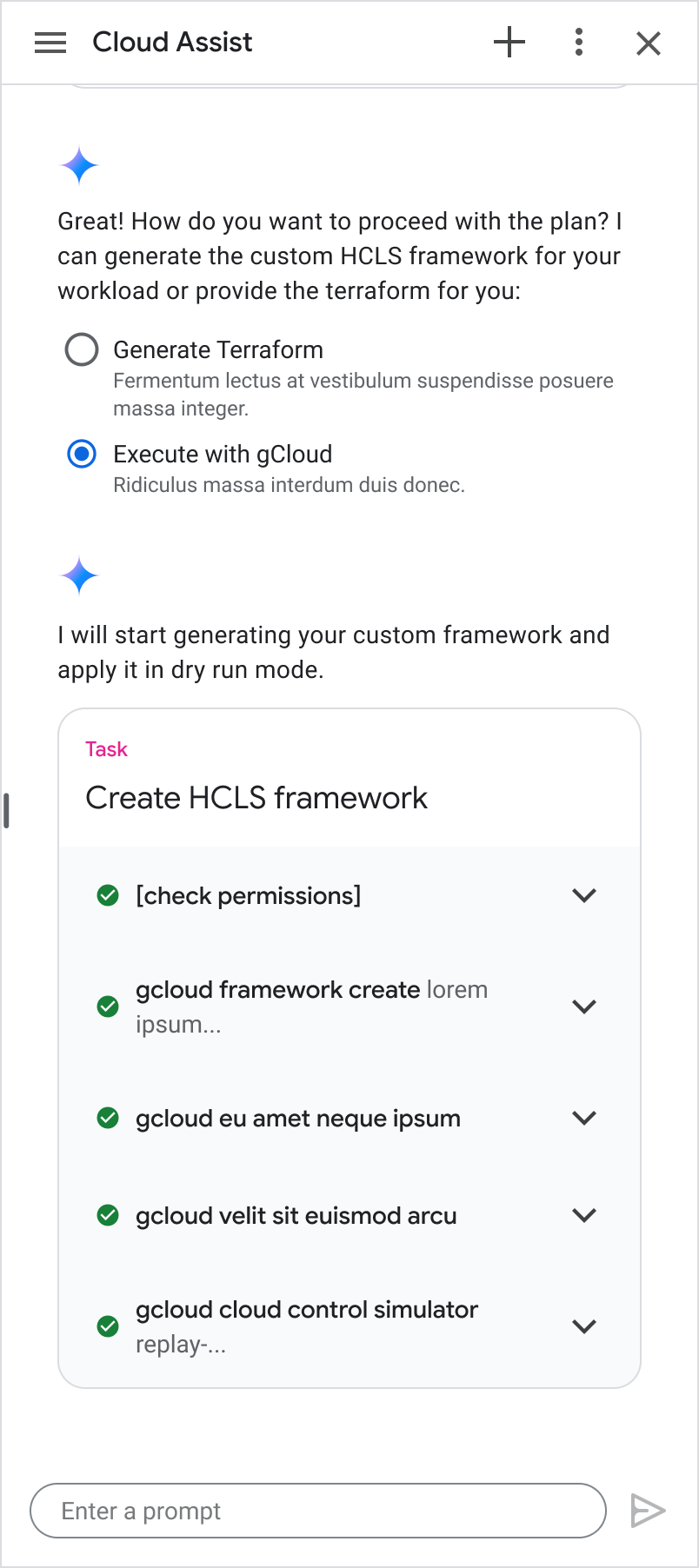

Translating the plan into configurable steps

Users can choose to configure via Terraform or gcloud. I designed a Multi-task Execution component that brings users along every step as each action executes. This component was later contributed to the core Gemini Cloud Assist platform.

Security finding remediation designs

Automating security remediation in developer workflows

The problem: Remediating a security finding is rarely straightforward. The process involves high-friction points across the journey:

Fragmented investigations — when an alert fires, gathering context requires jumping manually across multiple tools just to understand the blast radius.

Imprecise routing — for a security role who receives the finding, identifying the developer owner responsible is notoriously slow and cumbersome.

Researching fixes — DevOps engineers spend cycles researching compliance requirements to design a safe code change that won't break dependencies.

Broken audit loops — verifying a fix and closing the loop is a disjointed, manual process that frequently leaves compliance records incomplete.

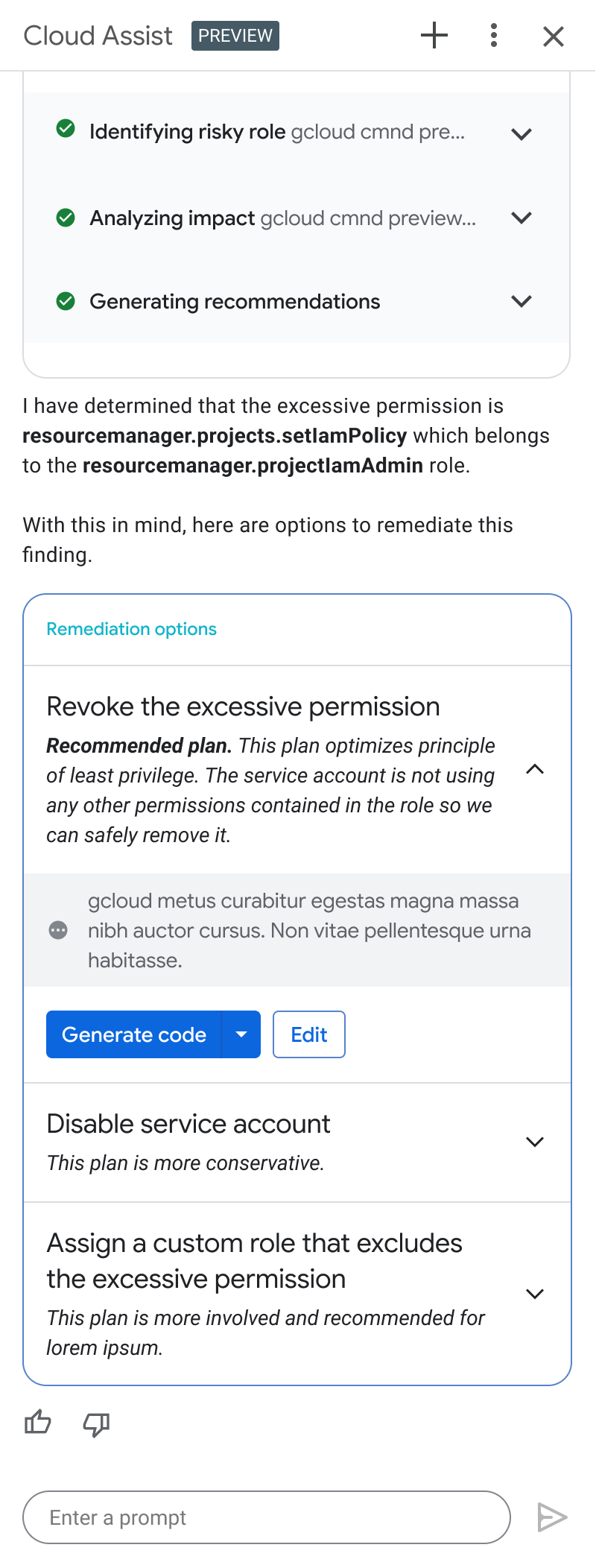

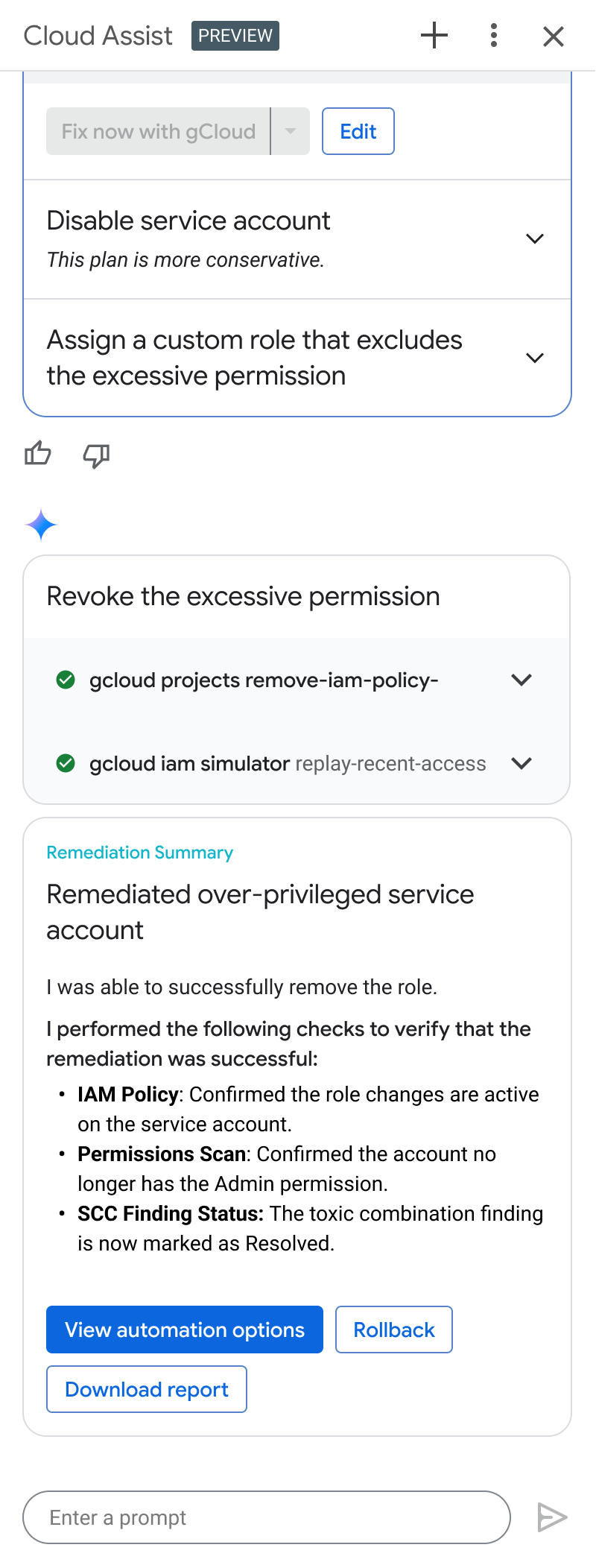

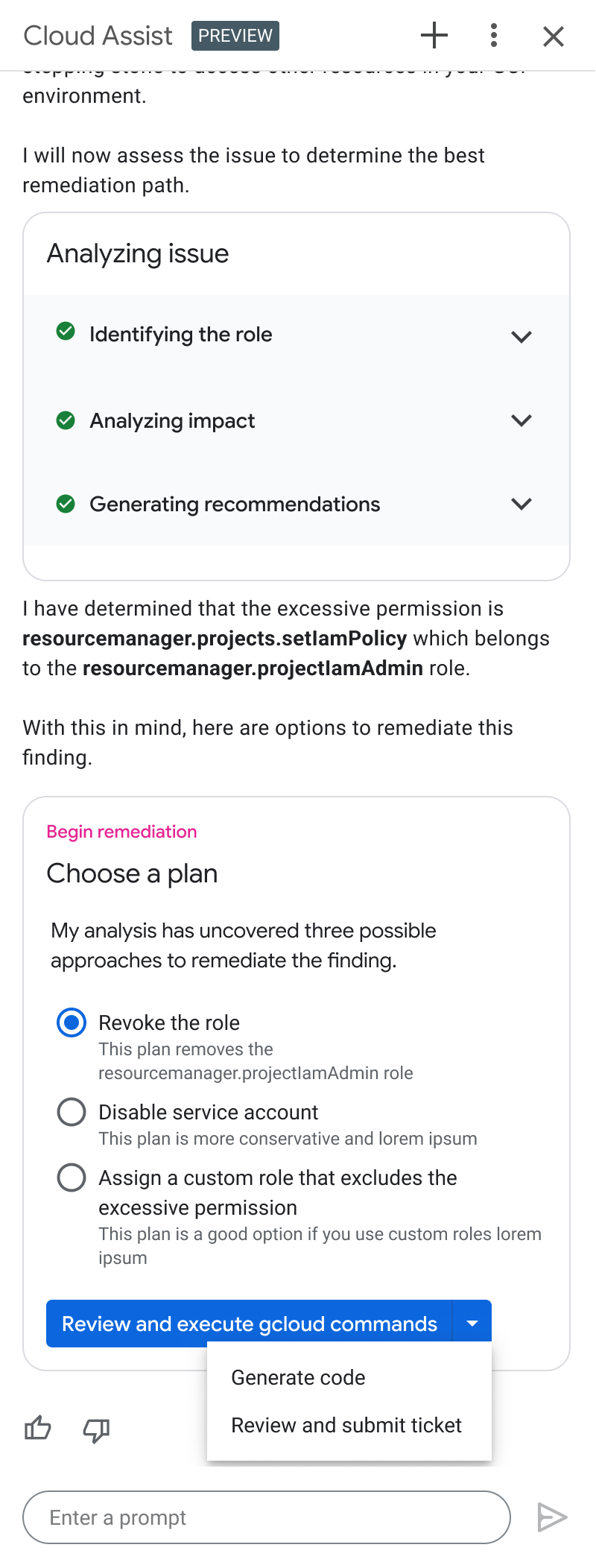

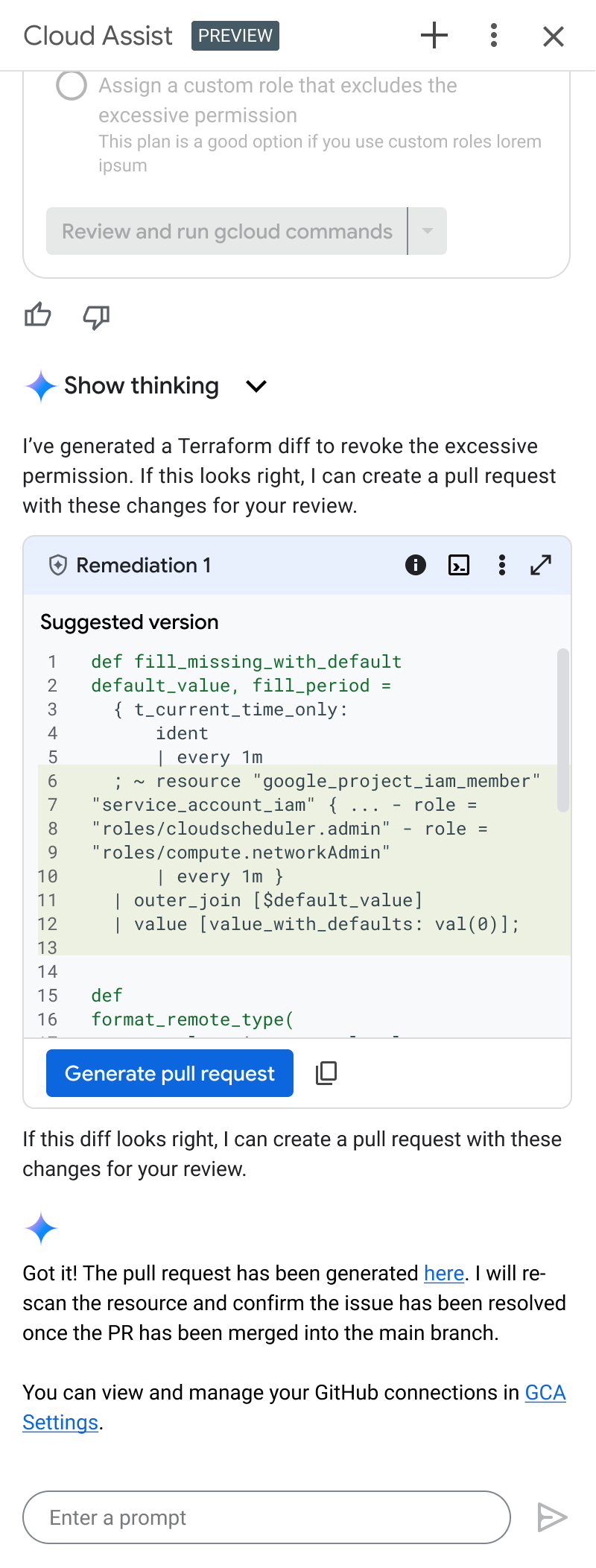

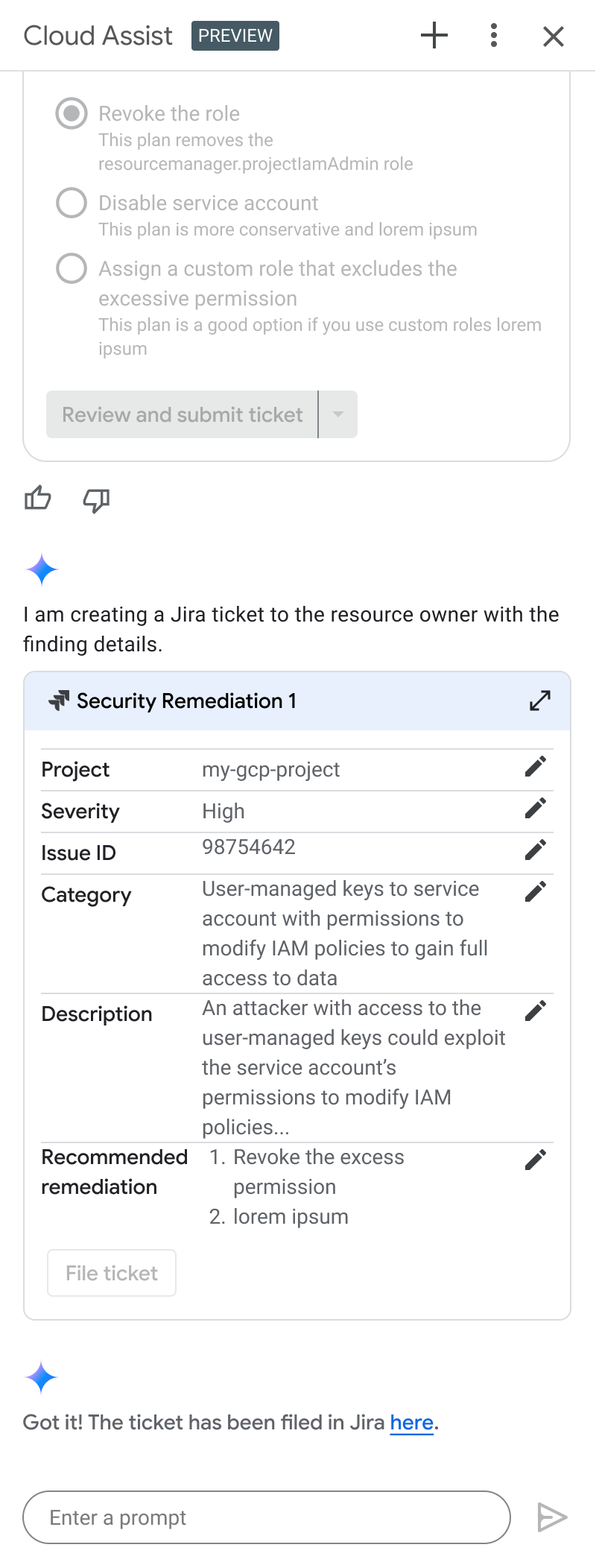

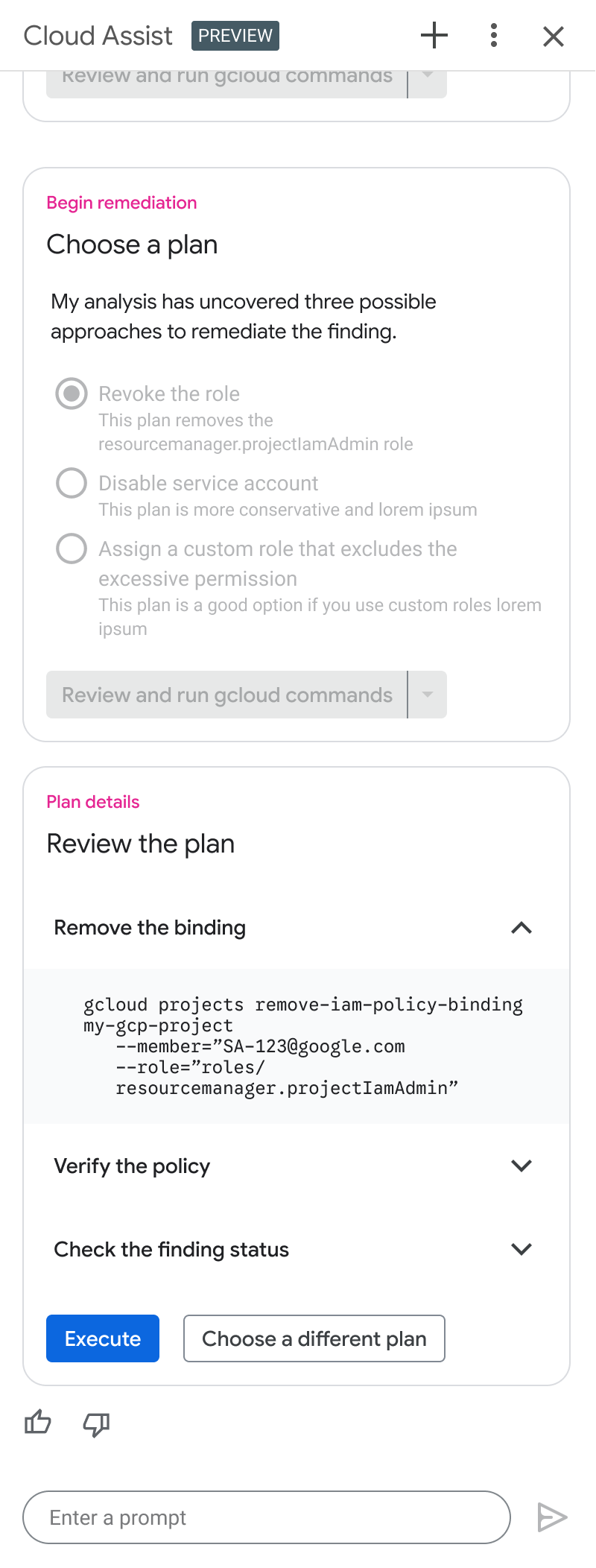

Design solution: I designed a multi-step agentic flow in the console chat platform, Gemini Cloud Assist, that remediates without requiring users to leave their workflow or consult a specialist.

1 - Finding Analysis

Gemini identifies the finding, explains the issue in plain language, and presents a recommended remediation along with alternatives.

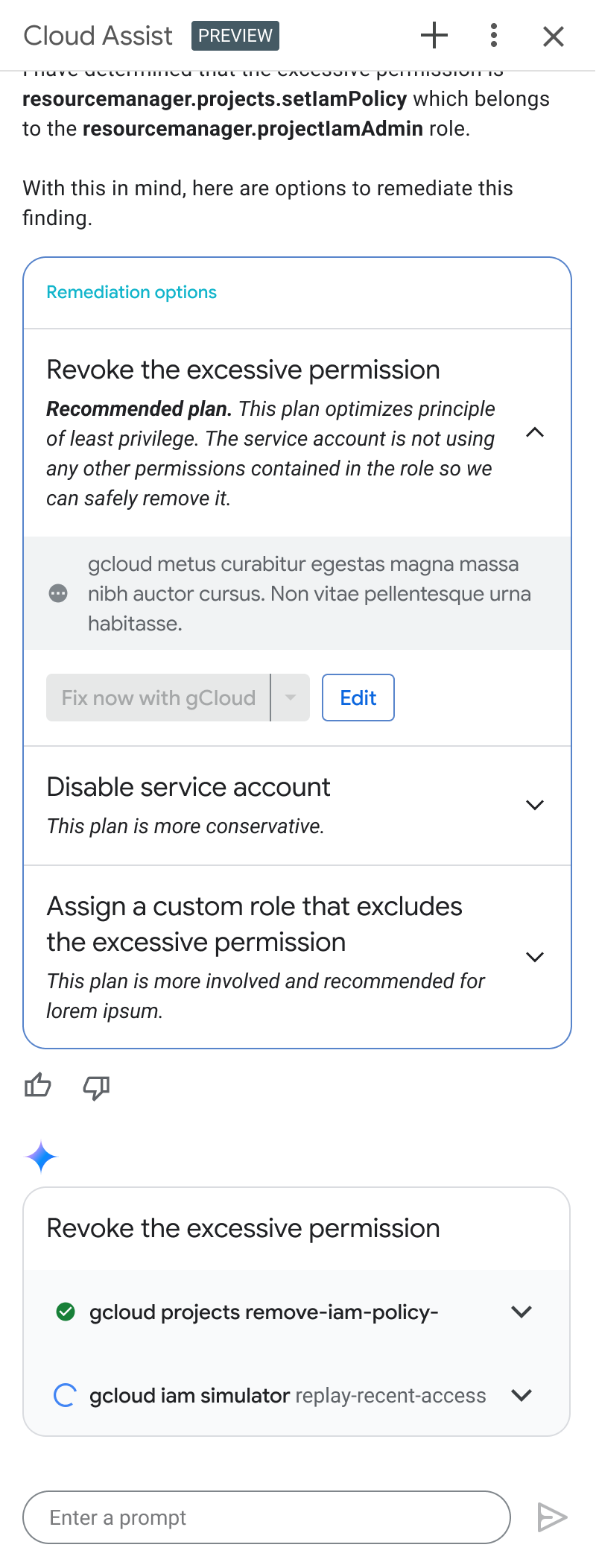

2 - Remediate

Once the developer selects an approach, Gemini executes the remediation — showing its thinking for each step.

3 - Verify

After the fix is merged, Gemini confirms the remediation was successful and provides a summary with rollback options if needed.

To accommodate diverse enterprise CI/CD practices, I designed a modular remediation framework that lets users route AI-generated fixes through their existing workflows via direct code generation, CLI execution, or automated ticketing.

Remediation options

Pull Request

Ticket

gcloud commands
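To make the modular idea concrete, the routing can be sketched as a small registry in which each remediation option is one interchangeable handler. This is an assumption-laden illustration, not the production design: `Fix`, `channel`, `route`, and the channel names are hypothetical, and the handlers only format text instead of calling a source-control, ticketing, or CLI system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Fix:
    """Hypothetical AI-generated fix, held in a channel-neutral form."""
    finding_id: str
    summary: str
    patch: str    # proposed code change, for example a diff
    command: str  # equivalent CLI command produced by the agent


Handler = Callable[[Fix], str]
HANDLERS: dict[str, Handler] = {}


def channel(name: str) -> Callable[[Handler], Handler]:
    """Register a delivery channel under the given name."""
    def register(handler: Handler) -> Handler:
        HANDLERS[name] = handler
        return handler
    return register


@channel("pull_request")
def as_pull_request(fix: Fix) -> str:
    # Deliver the fix as a code change for review.
    return f"PR: {fix.summary}\n\n{fix.patch}"


@channel("ticket")
def as_ticket(fix: Fix) -> str:
    # Deliver the fix as a work item for the owning team.
    return f"Ticket for {fix.finding_id}: {fix.summary}"


@channel("cli")
def as_cli(fix: Fix) -> str:
    # Deliver the fix as a command the user can run themselves.
    return fix.command


def route(fix: Fix, channel_name: str) -> str:
    """Send the same fix through whichever channel the user's workflow requires."""
    if channel_name not in HANDLERS:
        raise ValueError(f"Unknown channel: {channel_name}")
    return HANDLERS[channel_name](fix)
```

In this sketch, supporting another team's workflow means registering one more handler. The fix itself and the rest of the flow stay unchanged.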

SCALING & EVANGELISM

Shaping a platform in motion.

A significant part of this work involved embedding with the Cloud Assist Platform UX team to build new agentic interaction patterns to meet aggressive integration timelines for Gemini CLI capabilities. I contributed two patterns — multi-step planning and consecutive task completion — to the centralized component library, where they're now available across the platform.

OUTCOMES & IMPACT

Designing verifiable agentic experiences that accelerate enterprise adoption in high-risk environments.

The final designs were fully approved by executive leadership and actively being developed for launch.

A few highlights of my impact include:

Validating a 90% faster compliance workflow

By benchmarking our compliance prototypes against the existing manual spreadsheet process, we validated a projected 90% reduction in time-to-value for compliance admins.

Balancing agentic abilities with user trust

By adding verifiable safeguards, I mitigated the fear of production risk for users, reducing the barrier to adoption of AI solutions.

Bridging product boundaries

Rather than building siloed features, anchoring the org on end-to-end Jobs-to-be-Done gave disparate engineering teams a shared roadmap and unified the experience.

Evolving our agentic chat platform

It was fun to jam with the Cloud Assist Platform UX team to build new agentic interaction patterns. The Multi-step Plan and Multi-task Execution UI components I designed were formally contributed back to the central library.